Azure Data Factory (ADF) is pretty nice. Its actually two different things welded together:

- The Data Flow activity is Databricks running on Apache Spark

- The rest of ADF - the Copy Data activity for example - is Microsofts own cloud-ETL stuff

That means there's often two different ways of doing something. Also, both halves of ADF have expression languages which are similar but not quite the same. Nobody seems to talk about this much.

If you want a general intro to ADF I'd recommend Cathrine Wilhelmsen's Beginners Guide. However in this post I'm just going to specifically talk about the Salesforce Connector.

ADF has a Salesforce Connector which is also pretty good BUT if you are loading data into Salesforce and want to see row-by-row results, it really makes it difficult for you to access that information. Like, it seems to have gone out of its way to make the results much less accessible than they should be.

What do I mean by row-by-row results? Lets say you use the Salesforce Bulk API to insert 100 Contacts into Salesforce. For the rows that successfully import, you want to know the Id that Salesforce assigned to the new row. For the rows that fail, you want to know the error message, so that you can try and fix it and then try again. Both these bits of information (Id of successful rows, Error message of failed rows) are usually made available to you by the Bulk API.

But for some reason the ADF Salesforce connector makes that info very hard to get.

Skip Incompatible Rows Setting

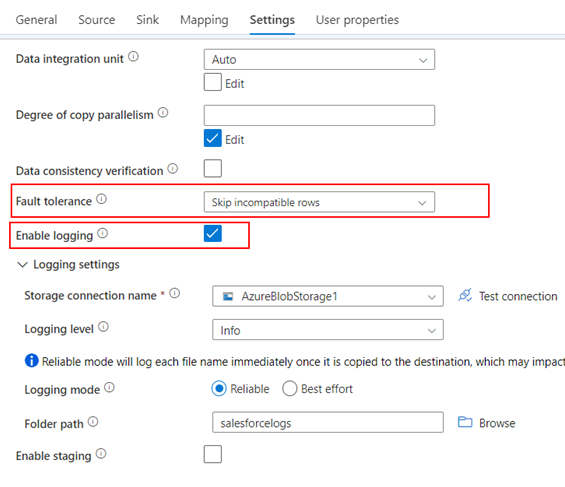

First, just some tips: When you're using the Salesforce Connector, by default the Copy Activity will just mark itself as 'failed' if any of the rows you are uploading to Salesforce fail. To get around that you need to select 'Skip Incompatible Rows' in the Settings/Fault Tolerance box:

This is essentially telling it "if some of the rows get rejected by Salesforce due to errors, log the errors but continue on"

You also need to check the 'Enable Logging' box. And you need to configure the logging settings below that so that ADF has somewhere to put the logs. So now if some of the rows fail, the Copy Activity as a whole will still mark itself as 'succeeded' and the failed rows will get logged, somewhere, somehow.

Finding the ADF Logs

Firstly, note that ADF will only log information about failed rows. If you want the Ids of the successful rows - which is normally part of what the Bulk API can give you - you're out of luck. You'll have to fetch the new rows yourself with another Copy Activity and then cross reference them with an External Id or alternate key.

The failed rows are logged in a CSV format in a nest of folders with GUID filenames. The pattern is:

copyactivity-logs/(Activity Name)/(Activity Run Id)/(some other guid).csv

In ADF you can get the path of the log file from the output of the activity, like this:

@activity('Name of the Copy Data Activity').output.logFilePath

Reading the ADF Logs

The ADF Log file will be a CSV file with the following columns:

- Timestamp

- Level (this will always say Warning)

- OperationName (this will always say TabularRowSkip)

- OperationItem

- Message

The last two are the ones to look at. Message gives you the error message - the reason why Salesforce rejected the row. OperationItem is the original CSV row of data that you sent, now nested inside this CSV. That's right, CSV data nested inside another CSV with all the speechmarks escaped.

So a typical ADF Log file from a Copy Data activity using the Salesforce Connector might look like this:

Timestamp,Level,OperationName,OperationItem,Message

2023-05-07 14:55:02.4835285,Warning,TabularRowSkip,"""CUS003476"",""Terry"",""Smith"",""tsmith_2968@gmail,com.test"",""+404-1313-503473"",","INVALID_EMAIL_ADDRESS:Email: invalid email address: tsmith_2968@gmail,com.test:Email --"

2023-05-07 14:55:02.4934923,Warning,TabularRowSkip,"""CUS007354"",""Laura"",,""laura_eccles_3754@yahoo.co.uk.test"",""+404-1923-683475"",","REQUIRED_FIELD_MISSING:Required fields are missing: [LastName]:LastName --"

From this we can see row CUS003476 failed because the email address has a comma in it, and row CUS007354 failed because the LastName is missing.

So now you have the error messages, but the problem is, to correlate the error messages back to your original full set of data, you have to parse the contents of the OperationItem column to find your External Id or alternate key, and the OperationItem field is an ungodly mass of escaped speechmarks.

I will have to leave that task as an exercise for the reader. I built a re-useable parameterised pipeline to find the log, extract the useful bits, and link the error back to the original SQL table, but it was for a client so can't share it here.